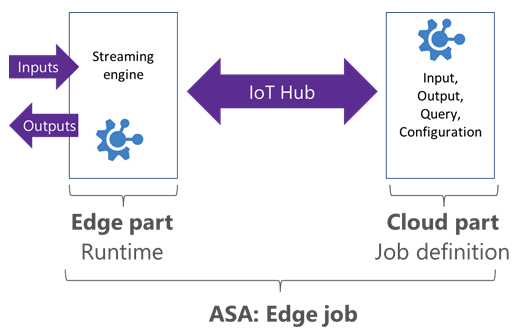

Azure Stream Analytics guarantees exactly-once event processing and at-least-once delivery of events, so events are never lost.

Exactly-once processing

Exactly-once processing guarantees the same results for a given set of inputs, which is vital for repeatability. It applies when a job is restarted and when multiple jobs run in parallel on the same input. Azure Stream Analytics guarantees exactly-once processing.

Exactly-once delivery

An exactly-once delivery guarantee means that events are delivered to the output sink exactly once, so there is no duplicate output. Achieving this requires transactional capabilities on the output sink.

Azure Stream Analytics guarantees at-least-once delivery to output sinks, which ensures that all results are output, but duplicate results may occur. However, exactly-once delivery can be achieved with certain outputs, such as Cosmos DB or SQL.

Outputs supporting exactly-once delivery with Azure Stream Analytics

Cosmos DB

With Cosmos DB, Azure Stream Analytics guarantees exactly-once delivery. No transformations are required by the user.

SQL

With SQL, exactly-once delivery can be achieved if the following criteria are met:

- all output streaming events have a natural key

- the output SQL table has a unique constraint (or primary key) created using the natural key
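The effect of the two criteria above can be sketched with a small, self-contained example. This is only an illustration of the principle, not how Azure Stream Analytics writes to SQL: it uses Python's built-in `sqlite3` module as a stand-in for the SQL output, and the table `readings`, the column `event_id` (playing the role of the natural key), and the helper `deliver` are all hypothetical names. A replayed batch hits the primary key constraint, so the duplicates are dropped and the table ends up with each event exactly once.

```python
import sqlite3


def deliver(conn, events):
    """Insert events, skipping any whose natural key is already stored.

    Hypothetical helper: it mimics a writer that may resend events
    (at-least-once delivery) against a table with a unique key.
    """
    written = 0
    for event_id, device, reading in events:
        try:
            conn.execute(
                "INSERT INTO readings (event_id, device, reading) VALUES (?, ?, ?)",
                (event_id, device, reading),
            )
            written += 1
        except sqlite3.IntegrityError:
            # The natural key already exists, so this event was delivered
            # earlier; discard the duplicate instead of failing.
            pass
    conn.commit()
    return written


# In-memory SQLite database used as a stand-in for the SQL output (assumption).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE readings (event_id TEXT PRIMARY KEY, device TEXT, reading REAL)"
)

batch = [("e1", "sensor-a", 20.5), ("e2", "sensor-b", 21.0)]

first = deliver(conn, batch)
# Simulate the same batch being resent, for example after a job restart.
replay = deliver(conn, batch)

row_count = conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0]
print(first, replay, row_count)
```

Without the primary key on `event_id`, the replayed batch would be inserted a second time, which is why both criteria are needed.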

Azure Table

All entities in an Azure Storage Table are uniquely identified by the concatenation of the RowKey and PartitionKey fields. To achieve exactly-once delivery, each output event must have a unique RowKey/PartitionKey combination.
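As a rough sketch of why the key combination matters, the example below models a table as a Python dictionary keyed by `(PartitionKey, RowKey)`. It does not use the Azure Storage SDK, and it assumes that a redelivered event replaces the existing entity with the same key; the helper `upsert_entity` and the sample field `reading` are hypothetical.

```python
def upsert_entity(table, entity):
    """Store an entity under its (PartitionKey, RowKey) pair.

    Hypothetical helper: writing the same key again replaces the earlier
    entity, which is the insert-or-replace behaviour assumed in this sketch.
    """
    key = (entity["PartitionKey"], entity["RowKey"])
    table[key] = entity


table = {}  # in-memory stand-in for an Azure Storage table (assumption)

events = [
    {"PartitionKey": "sensor-a", "RowKey": "2024-01-01T00:00:00Z", "reading": 20.5},
    {"PartitionKey": "sensor-a", "RowKey": "2024-01-01T00:01:00Z", "reading": 20.7},
]

# Deliver every event twice to simulate at-least-once delivery.
for event in events + events:
    upsert_entity(table, event)

entity_count = len(table)
print(entity_count)
```

If two different events shared the same `PartitionKey` and `RowKey`, the later one would overwrite the earlier one in this model, so the keys need to be unique per output event.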