What are Bots?

Bots are designed to solve common business problems using a natural, conversation-style approach, combined with machine learning for more advanced intelligence.

What are Chatbots?

A chatbot is a service, powered by rules and sometimes artificial intelligence, that users interact with via a chat interface (for example Skype, Microsoft Teams, Facebook Messenger, Slack or Telegram).

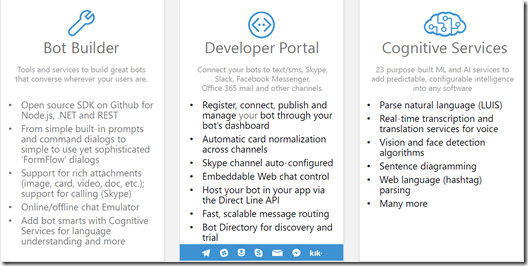
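To make the "powered by rules" part concrete, here is a minimal sketch of a rule-based chatbot. It is written in Python purely for illustration and does not use the Bot Framework; the `RULES` table, the `FALLBACK` text and the `reply` function are hypothetical names invented for this example.

```python
import re

# Hypothetical rule table: keyword -> canned reply (illustrative only).
RULES = {
    "hello": "Hi there! How can I help you?",
    "hours": "We are open 9am to 5pm, Monday to Friday.",
    "bye": "Goodbye!",
}

# Reply used when no rule matches.
FALLBACK = "Sorry, I did not understand that."


def reply(message: str) -> str:
    """Return the reply of the first rule whose keyword appears in the message."""
    # Lowercase the message and keep only alphabetic words, so punctuation is ignored.
    words = re.findall(r"[a-z]+", message.lower())
    for keyword, answer in RULES.items():
        if keyword in words:
            return answer
    return FALLBACK


if __name__ == "__main__":
    print(reply("Hello bot"))
    print(reply("What are your hours?"))
    print(reply("Tell me a joke"))
```

A bot like this only understands the keywords it was given. This is where artificial intelligence can help, by recognising what the user means even when the wording changes.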

Microsoft Bot Framework

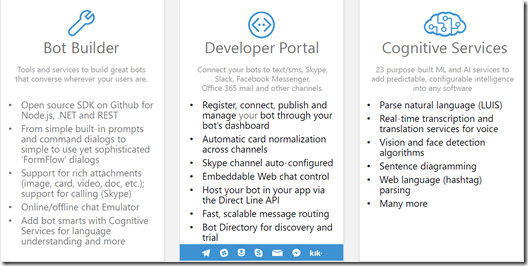

The Microsoft Bot Framework enables organisations to build and deploy intelligent bots. It provides tools to build and connect bots that interact naturally wherever the users are talking, from text/SMS to Skype, Slack, Office 365 mail and other popular services.

The Bot Framework also gives developers tools for common bot-building problems, e.g., automatic translation to more than 30 languages, user and conversation state management, debugging tools, an embeddable web chat control, and a way for users to discover, try, and add bots to the conversation experiences they love.

The Bot Framework has a number of components including the Bot Connector, Bot Builder SDK, and the Bot Directory.

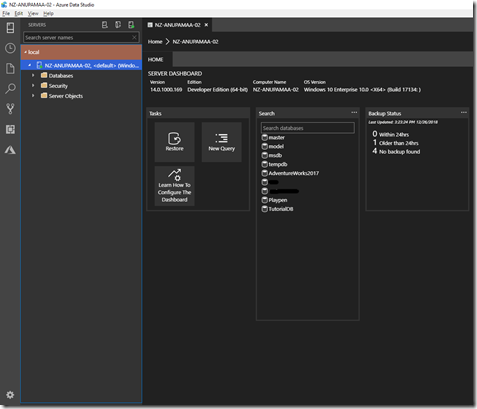

Creating your first Bot using Visual Studio 2017

Make sure the following prerequisites are downloaded and installed successfully.

a) Bot Framework Emulator (https://github.com/Microsoft/BotFramework-Emulator)

b) Bot Application Template: download the BotApplication.zip file from http://aka.ms/bf-bc-vstemplate and copy it to the Visual Studio Templates folder for C#. (The location of the Templates folder can be identified using the screenshot below.)

Creating your first Bot

- Open Visual Studio 2017 as Administrator, click on “New Project” and select “Bot Application”.

- The Bot Application project template creates a fully functional bot named “Echo Bot”.

- Press F5 to confirm that the “Echo Bot” application runs successfully and that you see the following screenshot.

- Now let us invoke the EchoBotApplication endpoint using the Microsoft Bot Framework Emulator.

- Click on the “Connect” button and type the message “Welcome to your first Bot Application”. The bot will respond with the number of characters in the input text.

- Click on the message you sent to see the corresponding JSON that was sent to the bot. In the same way, click on the response to see its JSON.

- Now you have created your first bot.
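The echo behaviour and the JSON shown in the emulator can be sketched in a few lines. The following is a simplified illustration in Python, not the C# code generated by the template: the `echo_reply` function and the sample `incoming` message are hypothetical, the activity contains only a small subset of the fields a real message carries, and the exact wording of the reply text is an assumption.

```python
import json


def echo_reply(activity: dict) -> dict:
    """Build a reply activity that echoes the text and reports its length."""
    text = activity.get("text") or ""
    return {
        "type": "message",
        # Assumed wording; the real bot may phrase its answer differently.
        "text": f"You sent {text} which was {len(text)} characters",
        # Swap sender and recipient so the reply goes back to the user.
        "from": activity.get("recipient"),
        "recipient": activity.get("from"),
        # Link the reply to the message it answers.
        "replyToId": activity.get("id"),
    }


# Hypothetical, heavily simplified incoming message activity.
incoming = {
    "type": "message",
    "id": "1",
    "text": "Welcome to your first Bot Application",
    "from": {"id": "user", "name": "User"},
    "recipient": {"id": "bot", "name": "Bot"},
}

if __name__ == "__main__":
    # Print the reply as JSON, similar to what the emulator displays.
    print(json.dumps(echo_reply(incoming), indent=2))
```

Comparing the incoming and outgoing JSON in the emulator in this way is a quick check that the bot received the text you typed and answered the right conversation.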

Let us see how we can create more real-world bots in subsequent blog posts.